In case you missed my article on honeypots, you can read here first explaining what there are for and how to install the open-source project honeypot, OpenCanary. If you followed the demonstration and deployed an instance of OpenCanary some logs have been generated by now. You’ll probably want to perform analysis on the data.

If you are looking for the location of these logs, they are located at:

/var/tmp/opencanary.logAnalyzing the log file

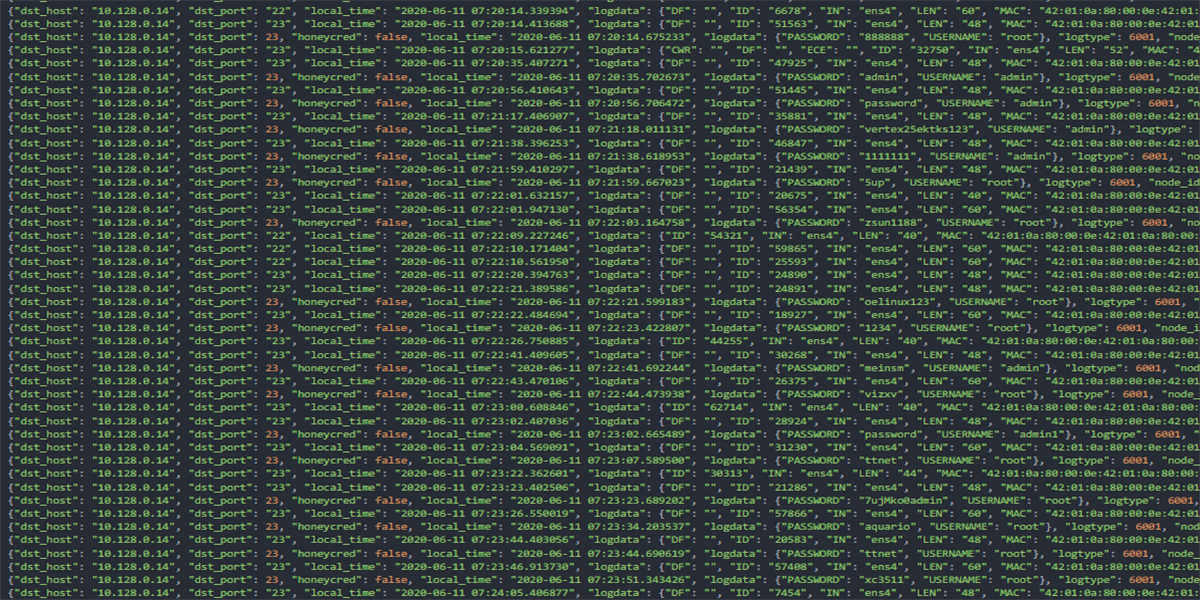

Opening up the log file (see featured image) you will find a wealth of information to start researching. The good thing is that it appears to be in JSON or JavaScript Object Notation. If you are familiar with JSON you know that although it is in human readable form, it is also structured in a way that modern programming languages can easily convert to datatypes to work with in applications.

I was going to mine this data with C# but since OpenCanary is written in Python, we’ll be consistent and use Python instead. Python has a JSON package in its standard library and we can use it to parse the data into a Python data structure.

import json

with open("opencanary.log", "r") as f:

json_data = json.dumps(f)Unfortunately for OpenCanary logs, they are not structured to valid JSON. You’ll be met with a TypeError.

Traceback (most recent call last):

...

TypeError: Object of type TextIOWrapper is not JSON serializableThe problem is OpenCanary outputs events to the log as a dictionary object for each line. It doesn’t take into consideration that it isn’t valid JSON. It is easy to fix, however. You just need to add the beginning and closing brackets to validate the dictionary entries into a proper list in JSON. We want to parse a lot of logs and this isn’t convenient to have to do this to every file first. So let’s factor this into our Python script.

[ <------ add beginning square bracket

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 04:49:06.473372", "logdata"}

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 04:49:08.204443", "logdata"}

/// entire log file

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 05:03:09.830600", "logdata"}

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 05:03:11.170285", "logdata"}

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 05:03:12.336498", "logdata"}

] <------ add closing square bracketYou will also run into inconsistencies in the information log type. Looking at these two entries with the same log type, their data structure is different. We have to provide logic to catch the starting log that has that different structure. None other than the starting information entry seems to have this issue.

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 04:49:08.204443", "logdata": {"msg": "Canary running!!!"}, "logtype": 1001, "node_id": "****", "src_host": "", "src_port": -1}

{"dst_host": "", "dst_port": -1, "local_time": "2020-06-08 04:49:06.473372", "logdata": {"msg": {"logdata": "Added service from class CanaryFTP in opencanary.modules.ftp to fake"}}, "logtype": 1001, "node_id": "****", "src_host": "", "src_port": -1}Now that we have the major bugs identified we can start building our script so we can preform custom analysis on the data. The following is how we can build a framework to perform complex research into OpenCanary logs.

Setting up the data mining script

Open up a new Python file. Name it whatever you would like. Once you have it opening in your favorite editor, add the following. To perform JSON parsing, we will need to import the json module. We want to get the host name of our attackers so we need to import the socket module to perform reverse IP checking. Then declare the variables we are going to use. We need some constants for the log type id numbers that OpenCanary uses for its event identification. Then we create several lists. One to hold all of them and the others to sort the events into identifiable lists for specific analysis.

# filename: log_parse.py

import json

import socket

CANARY_FILE = "opencanary.log"

INFORMATION_LOG = 1001

BRUTE_FORCE_LOG = 6001

PORT_SCAN_LOG = 5001

HTTP_SCAN_LOG = 3000

log_data = []

information_logs = []

bruteforce_logs = []

portscan_logs = []

http_logs = []So we can see the layout of our script, here is the main function. In essence, we want to open and parse the JSON log file, sort the events by category, and print the information we want from the data.

def main():

parse_logfile(CANARY_FILE)

sort_totals()

print("[*] Log totals\n")

print_information_logs()

print_bruteforce_logs()

print_portscan_logs()

print_http_logs()

print_bruteforce_analytics()

if __name__ == "__main__":

main()Correctly parsing the JSON

First we open the log file. Since we have that problem with invalid JSON, we simply take each line by line and convert the dictionary object into our log_data list. By passing the need to be apart of a larger list of dictionaries data structure like when loaded as a whole file. That was easy. Now we have our data represented in Python.

def parse_logfile(file_path):

with open(file_path, "r", encoding="utf-8") as f:

for line in f:

log_data.append(json.loads(line))We successfully loaded the list of event objects but it is in a huge list. Let’s sort them into our categories and leave the data intact. You may want to manipulate that data as the records are the timeline of all the events. That may be useful. Since our log data is represented in Python datatypes now, we can iterate over the log_data list and sort by the events id number. If it matches one of our constants, it will be put into the property list category.

def sort_totals():

for a in log_data:

if a["logtype"] == INFORMATION_LOG:

information_logs.append(a)

elif a["logtype"] == BRUTE_FORCE_LOG:

bruteforce_logs.append(a)

elif a["logtype"] == PORT_SCAN_LOG:

portscan_logs.append(a)

else:

http_logs.append(a)Pretty printing basic information

It could be that we don’t want to perform specific methods on the data but want an easier way to read display of the basic information for each type of event. The following are some print methods that extract that information and print to terminal.

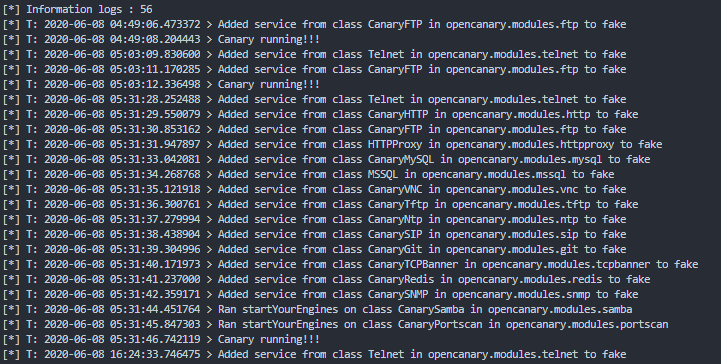

Here we can print the information logs, selecting only the local_time, and msg variables. Keep in mind that this is where the inconsistencies arise for the datatypes. On the “Canary Running” event, that record has a slightly different datatype than the other information events. If you don’t have this conditional the script will break unfortunately.

Here we get a total of these types of logs and iterates over each log in console.

def print_information_logs():

print(f"[*] Information logs : {len(information_logs)}")

for info in information_logs:

if info['logdata']['msg'] == "Canary running!!!":

print(f"[*] T: {info['local_time']} > {info['logdata']['msg']}")

else:

print(f"[*] T: {info['local_time']} > {info['logdata']['msg']['logdata']}")

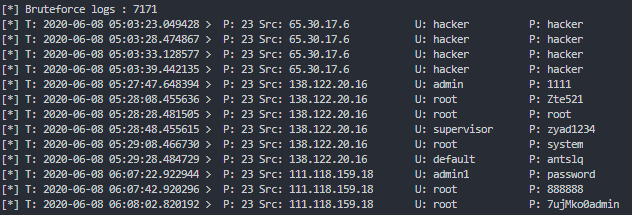

For brute-force logs; we want the time, destination port that is being hit on the server, the source of the attack, and the credentials they tried to use on the honeypot. Here is the method for extracting that data. For some reason, Python didn’t allow for accessing nested dictionaries in this case so we have to extract the credentials used into a second variable. We also use some alignment techniques in the string interpolation to make the output readable.

Now we have all the unauthorized access attempts on the honeypot.

def print_bruteforce_logs():

print(f"[*] Bruteforce logs : {len(bruteforce_logs)}")

for brute in bruteforce_logs:

creds = brute['logdata']

print("[*] T: {0:25} > P: {1} Src: {2:20} U: {3:15} P: {4:15}"

.format(brute['local_time'],

brute['dst_port'],

brute['src_host'],

creds['USERNAME'],

creds['PASSWORD']))

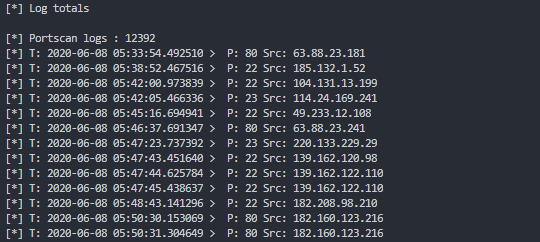

If you configured your honeypot to listen on all ports, you should be able to trace all port scans against the server.

We want the time, destination port, and the source of the scan.

def print_portscan_logs():

print(f"[*] Portscan logs : {len(portscan_logs)}")

for scan in portscan_logs:

print("[*] T: {0:25} > P: {1} Src: {2:20}"

.format(scan['local_time'],

scan['dst_port'],

scan['src_host']))

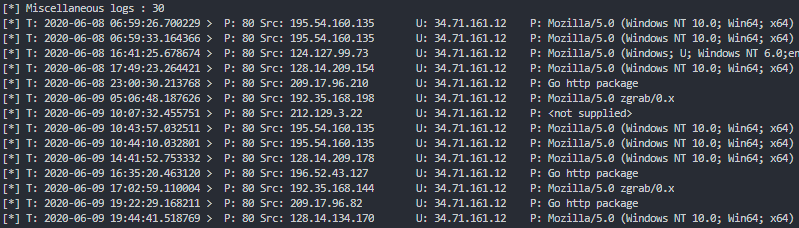

This last information displaying method tracks all the port scans done on port 80, the typical port for accessing a website on the internet. We can even pull the host name and user agent string to see if a spider may be trying to crawl our honeypot. Typical and usually not malicious.

def print_http_logs():

print(f"[*] Miscellaneous logs : {len(http_logs)}")

for http in http_logs:

print("[*] T: {0:25} > P: {1} Src: {2:20} U: {3:15} P: {4:15}"

.format(http['local_time'],

http['dst_port'],

http['src_host'],

http['logdata']['HOSTNAME'],

http['logdata']['USERAGENT']))

Performing dynamic analysis on the data.

There is a framework in place now. We can extend this to perform dynamic analysis on the data.

- What if we want to get just the list of the IP address running brute-force attacks on us?

- Perhaps it’s important to our research to know the passwords being used in common day attacks.

- Could we do a reverse IP check on the IP addresses to get a host name?

Of course we can! That is the beauty of being able to represent the logs in Python data types. We can now create any method we want to get the analysis we are looking for. This last method ends the demonstration but this will give you some ideas on how to utilize complex calculations, sorting functions, or reaching out to the internet and pulling back correlating information for an even clearer picture.

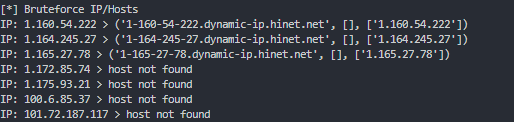

Here we iterate over the brute-force events, and collect the list of IP addresses attacking us, as well as a list of the credentials used. Once they are sorted alphabetically, we run a reverse IP check on the IP address so we can potentially get a hostname connecting the IP address to a DNS name. Then print out its results as well as the username/password combinations being use.

def print_bruteforce_analytics():

list_of_ips = []

usernames = []

passwords = []

for brute in bruteforce_logs:

creds = brute['logdata']

if brute['src_host'] not in list_of_ips:

list_of_ips.append(brute['src_host'])

if creds["USERNAME"] not in usernames:

usernames.append(creds['USERNAME'])

if creds['PASSWORD'] not in passwords:

passwords.append(creds['PASSWORD'])

list_of_ips.sort()

usernames.sort()

passwords.sort()

print("[*] Bruteforce IP/Hosts")

for ip in list_of_ips:

try:

hostname = socket.gethostbyaddr(ip)

except:

hostname = 'host not found'

print(f"IP: {ip} > {hostname}")

print("[*] Usernames used")

for username in usernames:

print(username)

print("[*] Passwords used")

for password in passwords:

print(password)

Wrap up

Performing research isn’t always straight forward. Sometime you have to really dig in to get the results you want. If you were not familiar with the Python language before this, you can now see the power of this scripting language. And hopefully this helps you in overcoming the difficulties of parsing invalid JSON data so you can leverage your data for even more unique results. If you want the whole Python script, you can access it as a Gist on my Github account.

Comments are closed, but trackbacks and pingbacks are open.